Research Overview

Decision making

People frequently make decisions in uncertain environments, such as investing in the stock market, choosing a healthcare plan, or deciding whether to take an umbrella based on an uncertain weather forecast. To examine how cognitive processes shape decision making under uncertainty, we developed the Florida-And-Georgia (FLAG) gambling task. During the FLAG task, participants chose between a sure-thing offer and a gamble with unknown outcomes. With computational modeling, we showed that young adult participants undervalued large gains but overvalued large losses, consistent with Prospect Theory (see figure on the right). Participants also focused more on recent outcomes, showing a recency bias. The FLAG task offers a valuable tool for probing how learning and memory interact with features of Prospect Theory to guide decisions. Using the FLAG task, ongoing research attempts to delineate the cognitive mechanisms of decision deficits in aging.
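The two ingredients named above, a Prospect Theory value function and a recency bias, can be sketched in a few lines. This is only an illustration and not the model fitted to the FLAG data. It assumes the standard power-form value function with the commonly cited Tversky and Kahneman (1992) parameter estimates, and a simple delta-rule update for recency. The function names, the `learning_rate` parameter, and all default values are assumptions chosen for the example.

```python
def subjective_value(x, alpha=0.88, beta=0.88, lam=2.25):
    """Prospect Theory value function (illustrative parameters, not fitted to FLAG data).

    Gains are compressed (x ** alpha with alpha < 1); losses are compressed
    by beta and then amplified by the loss-aversion weight lam.
    """
    if x >= 0:
        return x ** alpha
    return -lam * ((-x) ** beta)


def recency_weighted_estimate(outcomes, learning_rate=0.3, initial=0.0):
    """Delta-rule running estimate of a gamble's value.

    Each new outcome pulls the estimate toward itself by learning_rate,
    so later outcomes carry more weight than earlier ones (a recency bias).
    """
    estimate = initial
    for outcome in outcomes:
        estimate += learning_rate * (outcome - estimate)
    return estimate


# A gain of 100 is valued below 100; a loss of 100 is valued below -100.
print(subjective_value(100), subjective_value(-100))

# The same outcomes in a different order give different estimates.
print(recency_weighted_estimate([0, 0, 10]), recency_weighted_estimate([10, 0, 0]))
```

Under these assumed parameters, a large gain is worth less than its face value while a large loss weighs more than its face value, and a sequence that ends with a win is valued higher than the same sequence that starts with it.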

Artificial agents

As our society enters an age of artificial intelligence, our social realm is expanding to encompass intelligent machines and algorithms with increasingly human-like qualities. This growing “social circle” calls for research to extend its focus from humans to artificial agents, such as social robots.

Social robots that closely resemble humans tend to lead observers to attribute human characteristics, such as minds, to them (i.e., anthropomorphism). Nevertheless, this same human resemblance can also elicit a sense of mistrust, unease, and eeriness, a phenomenon known as the "uncanny valley."

My past work links the uncanny valley phenomenon to a hypothesized two-stage process: an initial anthropomorphism stage, characterized by the over-attribution of minds to robots, followed by a later correction stage in which the anthropomorphized robots are dehumanized.

To test this hypothesis, we presented human, android, and mechanical-looking robot faces at three exposure times (100 ms, 500 ms, and 1000 ms) and asked participants to rate each face's perceived animacy (as a proxy for mind attribution). We showed that perceived animacy varied as a function of both exposure time and face type. For android faces, animacy ratings were initially high but decreased with longer exposure. In contrast, animacy ratings were consistently high for human faces and consistently low for mechanical faces (see figure on the right).

Deepfakes

AI-generated deepfakes are increasingly prevalent, overcoming the uncanny valley but introducing new deception risks, especially for older adults with lower digital literacy.

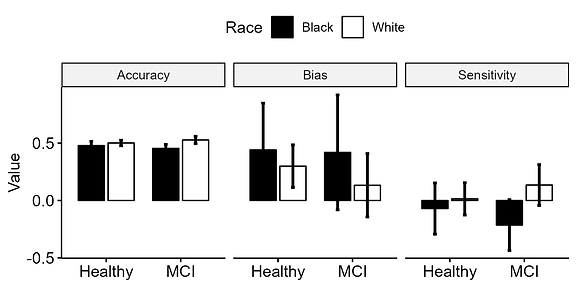

Our ongoing research explores why deepfakes are difficult to detect, how detection abilities change with age, and how deepfakes affect trust decisions. Preliminary findings suggest that older adults detect deepfakes at chance level, with Black participants showing greater vulnerability (see figure on the right).
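To make "chance level" concrete: in a two-choice real-versus-fake judgment, chance is 50% correct, and a binomial test asks whether an observed score differs reliably from that. The sketch below is a generic exact two-sided binomial test using only the standard library. It is not the analysis used in this research, the function name is hypothetical, and the counts in the usage lines are invented for illustration and are not data from the study.

```python
from math import comb


def binomial_p_value(correct, trials, chance=0.5):
    """Exact two-sided binomial test against a chance rate.

    Sums the probability of every outcome that is no more likely than the
    observed count under the null hypothesis of guessing at `chance`.
    """
    pmf = [
        comb(trials, k) * chance ** k * (1 - chance) ** (trials - k)
        for k in range(trials + 1)
    ]
    observed = pmf[correct]
    # Small relative tolerance guards against floating-point ties.
    p = sum(prob for prob in pmf if prob <= observed * (1 + 1e-9))
    return min(1.0, p)


# Invented counts, for illustration only.
print(binomial_p_value(52, 100))  # a score close to 50% correct
print(binomial_p_value(75, 100))  # a score well above 50% correct
```

A large p-value means the score is statistically indistinguishable from guessing, which is what "at chance level" describes.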